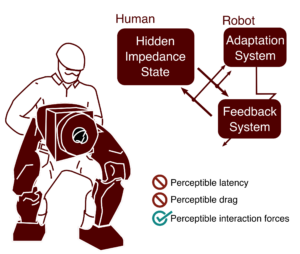

Advances in lightweight, backdrivable robot hardware are giving wearable and other physically interactive robots the physical capability to assist in everyday life. On the control side, today's robots, from assistive lower-body exoskeletons to interactive co-bot arms, can leverage knowledge of the task (e.g., walking or assembling furniture) to make assumptions about what the operator wants and will do. This lets them avoid the perceptible latency and drag that have come to be associated with the earlier paradigm of direct control.

In direct control, user intent was inferred by sensing interaction forces and robot acceleration, and control was applied to directly assist that intent, regardless of the task. To guarantee safe human–robot interaction, these controllers conservatively removed energy to prevent operator-induced oscillation. The result was complaints about perceptible drag, on top of a perceptible delay between an attempted motion and the feeling of assistance. Control approaches today therefore accept being restricted to a set of pre-defined tasks in exchange for inferring user intent in other ways.

The Human-Empowering Robotics and Control (HERC) Lab in the Mike J. Walker ’66 Department of Mechanical Engineering at Texas A&M University, however, aims to leverage recent advances in human modeling, human–robot interface design, and multi-contact impedance control to overcome these limitations of the direct control paradigm.
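To make the drag trade-off of direct control concrete, here is a minimal, hypothetical sketch of a single-axis direct controller. It assumes a 1-DOF robot of mass `mass` pushed by a constant human force, a control law that amplifies the sensed interaction force by a gain and subtracts a virtual damping force to remove energy, and simple Euler integration. The names `DirectController`, `simulate`, `gain`, and `damping` are illustrative only; this is not the HERC Lab's method, and it omits the acceleration-based terms a real direct controller would also use.

```python
from dataclasses import dataclass


@dataclass
class DirectController:
    """Hypothetical 1-DOF direct-control law (illustrative assumption only)."""

    gain: float     # amplification applied to the sensed interaction force
    damping: float  # virtual damping [N*s/m] that conservatively removes energy

    def command(self, f_interaction: float, velocity: float) -> float:
        # Assist whatever the operator is doing (no task model), then
        # subtract a damping force proportional to velocity.
        return self.gain * f_interaction - self.damping * velocity


def simulate(ctrl: DirectController, mass: float, f_human: float,
             dt: float = 1e-3, steps: int = 5000) -> float:
    """Return the robot velocity after `steps` explicit-Euler steps."""
    v = 0.0
    for _ in range(steps):
        u = ctrl.command(f_human, v)  # motor force from the control law
        a = (f_human + u) / mass      # Newton's second law on the 1-DOF load
        v += a * dt                   # explicit Euler velocity update
    return v


if __name__ == "__main__":
    # A 10 N push on a 5 kg load with 2x force amplification.
    for b in (20.0, 40.0):
        v = simulate(DirectController(gain=2.0, damping=b), mass=5.0, f_human=10.0)
        # Steady state approaches (1 + gain) * f_human / damping.
        print(f"damping={b:5.1f} N*s/m -> velocity after 5 s: {v:.4f} m/s")
```

In this toy model the gain makes the load feel lighter (an effective mass of `mass / (1 + gain)`), while the damping term keeps the response bounded but caps the speed at `(1 + gain) * f_human / damping`. Doubling the damping halves that cap, which is one way to picture the "perceptible drag" operators reported.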

What is Direct Control of Physically Interactive Robots?